For most of orchestral history, there was no conductor. Someone in the ensemble led the group while playing their own instrument. A violinist would nod to cue an entrance. A keyboard player would set the tempo from the bench.

Leadership happened from inside the performance.

Then Beethoven changed the math.

His compositions grew larger, more complex, more demanding. More instruments, more interplay between sections, more moments where the entire ensemble had to shift direction together. A violinist leading from the first chair couldn't see the full picture anymore. The music had outgrown the player-leader model.

By around 1820, a new figure appeared. Someone who stood in front of the orchestra, held no instrument, produced no sound, and shaped everything. The conductor's only tools were a baton and a point of view. Their only job was to hold the complete score in their head when every musician in the room could only see their own part.

Product leadership is going through the same split.

The Execution Surplus

The consulting firms are tripping over each other to publish the same prediction. Gartner projects that 40% of enterprise apps will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. Deloitte puts the autonomous AI agent market at $8.5 billion by 2026. McKinsey reports that high performers are three times more likely to have fundamentally redesigned their workflows around agents.

Read these reports together. Execution is becoming abundant and the bottleneck is moving.

When AI agents can write code, generate interfaces, run analyses, draft documentation, and triage customer feedback, the scarce resource is no longer the capacity to build. The scarce resource is knowing what to build, and whether what got built is any good.

The Score-Reader Problem

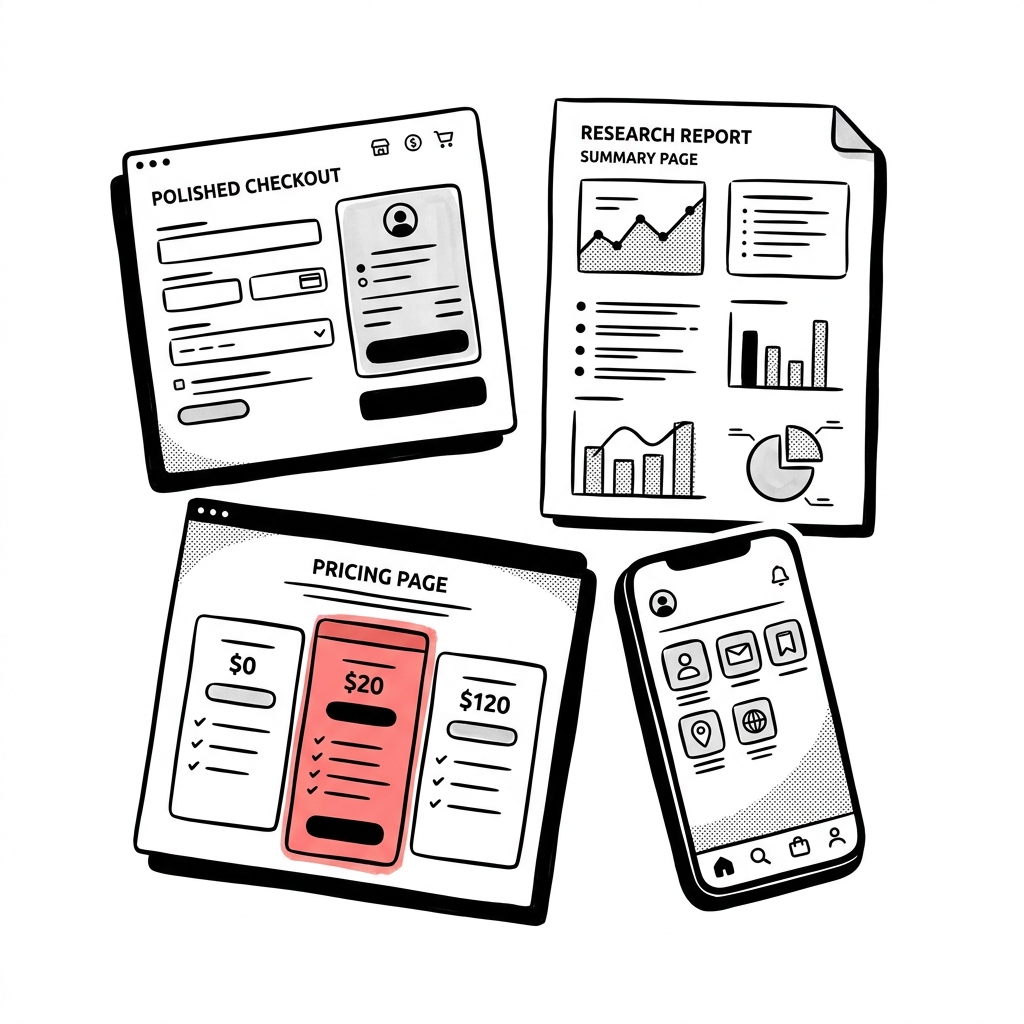

Picture a Monday morning. A coding agent has generated a working checkout flow overnight. A research agent has synthesized customer interviews and flagged that users abandon cart when shipping costs appear late. A design agent has produced three variations of a pricing page.

Each output is competent. The checkout flow works, the research synthesis is accurate, the design variations are polished.

But the checkout flow shows shipping costs on the final screen, exactly the pattern the research flagged as a dropout trigger. Two of the three design variations emphasize subscription pricing, which contradicts the company's strategic shift toward one-time purchases announced last quarter.

No individual agent got it wrong, but nobody was reading the full score.

In an orchestra, every musician sees their own part. The first violinist sees the violin line. The oboist sees the oboe line. Only the conductor sees every voice, every instrument, every dynamic marking at once. The point is not to play better than the musicians but to hold the complete picture when nobody else in the room can.

Product teams have the same gap. The question isn't whether each agent's output is good. It's whether they add up to something coherent.

A recent HBR piece flags the deeper problem. AI now handles "the messy, repetitive tasks that once built judgment." People with deep experience get huge productivity gains. Junior employees "often can't tell whether AI-generated work is any good or how to improve it."

When execution is distributed across agents, the ability to read the full score, to see how all the parts fit together and judge whether the whole is working, becomes the critical skill. Most organizations haven't figured out who holds it.

Intent as the New Artifact

For decades, product work organized itself around artifacts. Requirements documents, user stories, wireframes, mockups, Jira tickets. Each one was a translation layer between what someone wanted and what someone else would build.

When AI agents handle the building, those artifacts don't disappear, but their purpose changes.

A user story written for a human developer is an instruction. It describes what to build and provides enough context for a skilled person to fill in the gaps with their own judgment. A user story written for an AI agent is a constraint. It needs to encode intent precisely enough that the agent doesn't fill gaps with the wrong assumptions.

This is the same shift that happened in architecture when design briefs replaced detailed blueprints as the primary steering document. The blueprint tells you how to build something. The brief tells you what it needs to accomplish and why. When your builder is sophisticated enough to figure out the how, the what and why become the valuable inputs.

The product leader's job becomes less about specifying steps and more about specifying outcomes. Less about managing a backlog and more about defining what should exist.

That's a different discipline. A vague intent produces vague output. An ambiguous brief produces an ambiguous product. When a human developer receives a vague spec, they fill the gaps with experience and common sense. When an AI agent receives one, it fills the gaps with statistical probability. One of those tends to produce better results. And it isn't the second one.

From Process to Interpretation

In the early days of conducting, the job was mostly custodial. Keep the beat, cue the entrances, make sure the orchestra starts and stops together. Important coordination work, but fundamentally about keeping everyone in sync.

The conductor was a human metronome.

Then Richard Wagner changed the job description.

Wagner argued that conducting wasn't just coordination; it was interpretation. The conductor's role was to impose a vision on the performance, to decide what the music meant and shape every phrase, every tempo, every dynamic to serve that meaning. His 1869 essay "About Conducting" made the case that the conductor re-interprets a work rather than merely executing it.

Before Wagner, the conductor managed the process. After Wagner, the conductor defined the experience.

Product leadership is going through the same upgrade. When AI agents handle increasing amounts of execution, the product leader's job shifts from orchestrating human contributors toward something harder to see and more consequential: defining intent clearly enough that agents produce the right output, then judging whether they did. Most organizations are still learning to distinguish between output that is good and output that is merely plausible.

Deloitte's data puts numbers on the gap. Only 11% of organizations have successfully deployed AI agents in production. More than 40% of agentic AI initiatives may be cancelled by 2027. Eighty percent of leaders claim mature basic automation, but only 28% claim maturity with agents.

The technology works. What's missing is someone who can read the full score.

The Sound You Don't Make

The conductor shapes everything and plays nothing. No instrument, no sound, just a clear enough interpretation of the score that a hundred musicians produce something coherent.

This makes people uncomfortable. It's easy to value the person who writes the code, designs the screen, or builds the model. Their output is visible. The conductor's output only becomes visible when it goes missing. Put a bad conductor in front of a great orchestra and the music falls apart. The same notes, the same musicians, the same hall. The coherence disappears, and you start to understand what the conductor was actually doing.

Product leadership in a world of AI agents works the same way. The leader who defines intent clearly, judges output accurately, and holds the full picture when everyone else sees their own part will be hard to evaluate using traditional metrics. They won't have a portfolio of screens they designed or a repository of code they wrote.

Their contribution will be the coherence of the product itself.

Forrester, Gartner, McKinsey, and Deloitte are all describing the same shift. They're calling it orchestration, hybrid human-AI operating models, worker-and-process-centric design, digital workforce management.

Strip the jargon and the job is the same one that emerged in front of orchestras two hundred years ago.

Hold the full score. Interpret it clearly. Shape what you hear.

Play no instrument at all.